ERP Success Is Not Binary. It Is a Spectrum

Two years into an ERP program, a report reaches the executive table stating the project was delivered on time, within budget, and successfully went live. On paper, it is a success.

Inside the organisation, a different reality is emerging. Workarounds are increasing. Reporting remains unreliable. Teams are frustrated. The expected value is unclear.

This gap between what is reported and what is experienced is common. It is not primarily an execution issue. It is a definition issue.

The Old Thinking: Success as a Binary Outcome

Most ERP programs are governed by a simple model:

Delivered equals success. Not delivered equals failure.

This model is appealing because it is easy to communicate, easy to defend, and easy to close. However, it is fundamentally flawed.

ERP is not just a project. It is a shift in organisational capability. Capability cannot be reduced to a binary outcome.

The Reality: Success Is Multi-Dimensional

To understand this, consider how success is defined outside of business systems. No one evaluates personal success based on a single measure such as income or fitness. Personal success is understood as a combination of multiple factors, each weighted differently depending on the individual.

ERP success follows the same principle.

It is not one outcome. It is a position across a range of capabilities.

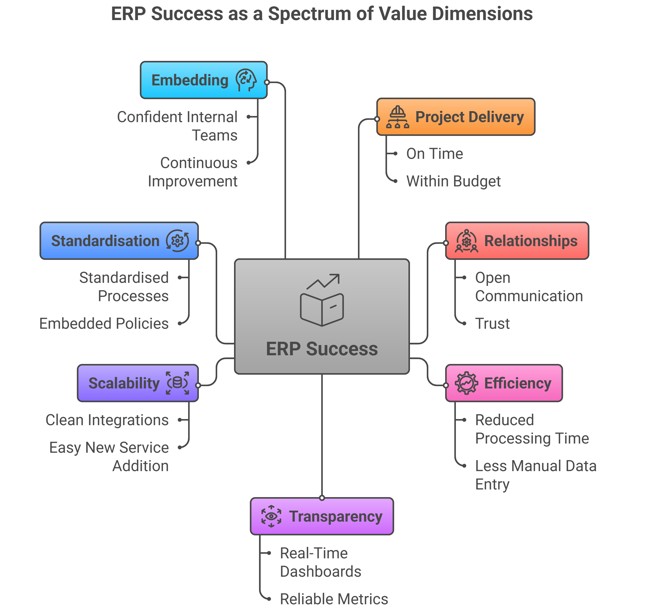

A Practical Model: Measuring ERP Success as a Spectrum

A more useful way to define ERP success is to assess it across a set of value dimensions, each representing a different aspect of organisational capability. These dimensions together form a spectrum. Where the organisation sits across them determines the real outcome of the ERP program.

1. Project Delivery

This dimension assesses whether the program was executed in a controlled and disciplined manner.

Example:

The ERP goes live within the approved budget and timeline, with no major operational disruption. Business users are able to perform core functions from day one, and there is no immediate fallback to legacy systems.

Contrast:

A system goes live “on time,” but key processes (e.g. payroll or procurement) require manual intervention for weeks. Technically delivered, but operationally unstable.

2. Relationships

This reflects whether the program built or eroded trust across stakeholders.

Example:

Business, IT, and the vendor maintain open communication. Issues are surfaced early. Stakeholders feel heard, and there is confidence in decision-making.

Contrast:

Escalations increase. Business blames IT, IT blames the vendor, and the vendor defends scope. Meetings become defensive rather than collaborative. Trust declines even if milestones are met.

3. Efficiency

This measures whether the ERP has improved how work is done.

Example:

Invoice processing time falls from 10 days to 3 days within six months. Manual data entry is reduced significantly. Staff spend less time reconciling errors.

Contrast:

The system is live, but processes take the same time or longer. Users create spreadsheets outside the system to complete tasks. Efficiency gains are not realised.

4. Transparency

This assesses whether leadership now has better visibility of the organisation.

Example:

Executives can access real-time financial dashboards. Budget-versus-actuals figures are visible daily. Operational metrics are reliable and consistent across departments.

Contrast:

Reports still require manual consolidation. Different departments produce conflicting numbers. Decision-making is delayed due to lack of trust in data.

5. Scalability

This determines whether the system can support future growth and change.

Example:

The ERP integrates cleanly with CRM, asset systems, and Microsoft tools. New services or business units can be added without significant rework.

Contrast:

Every new requirement needs customisation. Integrations are fragile. The organisation becomes dependent on external vendors for even minor changes.

6. Standardisation

This evaluates whether the organisation is operating in a consistent and controlled way.

Example:

Procurement processes are standardised across departments. Policies are embedded in the system. Variations are minimal and intentional.

Contrast:

Each department uses the system differently. Processes vary widely. Workarounds are common. The ERP reflects existing inconsistency rather than resolving it.

7. Embedding

This measures whether the change has been sustained and internalised.

Example:

Internal teams are confident using the system. Continuous improvement initiatives are led by the business. Reliance on external consultants reduces over time.

Contrast:

Months after go-live, the organisation still depends heavily on vendors or contractors. Knowledge remains external. Improvements stall.

The Combined View

Individually, each dimension provides a partial view. Together, they reveal the true position of the organisation.

For example:

- Strong delivery but weak embedding → short-term success, long-term risk

- High transparency but low standardisation → better visibility, but inconsistent execution

- Good relationships but poor efficiency → collaborative environment, limited value realisation

This is why ERP success cannot be reduced to a single outcome.

It must be understood as a profile across multiple dimensions, each moving at a different pace.

This model allows executives to see what is actually happening beneath the surface, and where intervention is required before issues become systemic.
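For teams that want to track this profile in a spreadsheet or script, the idea can be sketched in a few lines of Python. This is only an illustration: the seven dimension names come from the model above, but the 1 to 5 scoring scale, the threshold of 3, and the names `ErpProfile`, `gaps`, and `binary_verdict` are assumptions made for the example, not part of the model itself.

```python
from dataclasses import dataclass

# Dimension names follow the article. The 1-5 scale and the threshold
# of 3 used below are illustrative assumptions.
DIMENSIONS = (
    "project_delivery",
    "relationships",
    "efficiency",
    "transparency",
    "scalability",
    "standardisation",
    "embedding",
)


@dataclass(frozen=True)
class ErpProfile:
    """Hypothetical container: one score per dimension (assumed 1-5 scale)."""
    scores: dict

    def __post_init__(self):
        # Refuse a profile that leaves any dimension unassessed.
        missing = set(DIMENSIONS) - set(self.scores)
        if missing:
            raise ValueError(f"missing dimensions: {sorted(missing)}")

    def gaps(self, threshold=3):
        """Return dimensions scoring below the threshold, weakest first."""
        low = [d for d in DIMENSIONS if self.scores[d] < threshold]
        return sorted(low, key=lambda d: self.scores[d])

    def binary_verdict(self, threshold=3):
        """What the binary model reports: delivery alone decides."""
        delivered = self.scores["project_delivery"] >= threshold
        return "success" if delivered else "failure"


# Example scores are invented for illustration only.
profile = ErpProfile({
    "project_delivery": 5,
    "relationships": 4,
    "efficiency": 2,
    "transparency": 3,
    "scalability": 3,
    "standardisation": 2,
    "embedding": 1,
})

print(profile.binary_verdict())  # success
print(profile.gaps())            # ['embedding', 'efficiency', 'standardisation']
```

With these invented scores, the binary view reports a success while the profile shows three dimensions needing attention, which is the "strong delivery but weak embedding" pattern described above.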

The Shift: From Outcome to Position

When viewed through these dimensions, success is no longer a simple yes-or-no decision. It becomes a position across a spectrum.

An organisation may have strong project delivery but weak adoption, good relationships but poor data visibility, or efficient processes that do not scale. This is the real picture of ERP performance.

This perspective explains why many programs that are formally declared successful still feel like failures in practice.

Why This Matters at the Executive Level

At the executive level, the issue is not a lack of effort or intent. It is a limited lens through which success is defined and assessed.

This creates systemic problems. Programs are declared successful too early. Decisions are made based on delivery milestones rather than capability outcomes. Risks related to adoption, data, and operations remain invisible until they become critical.

In practical terms, the organisation cannot see what actually matters.

A More Useful Question

Instead of asking:

Is the ERP project successful?

Ask:

Where are we on the spectrum of capability across these dimensions?

This shift changes what is measured, what is discussed, and what is prioritised.

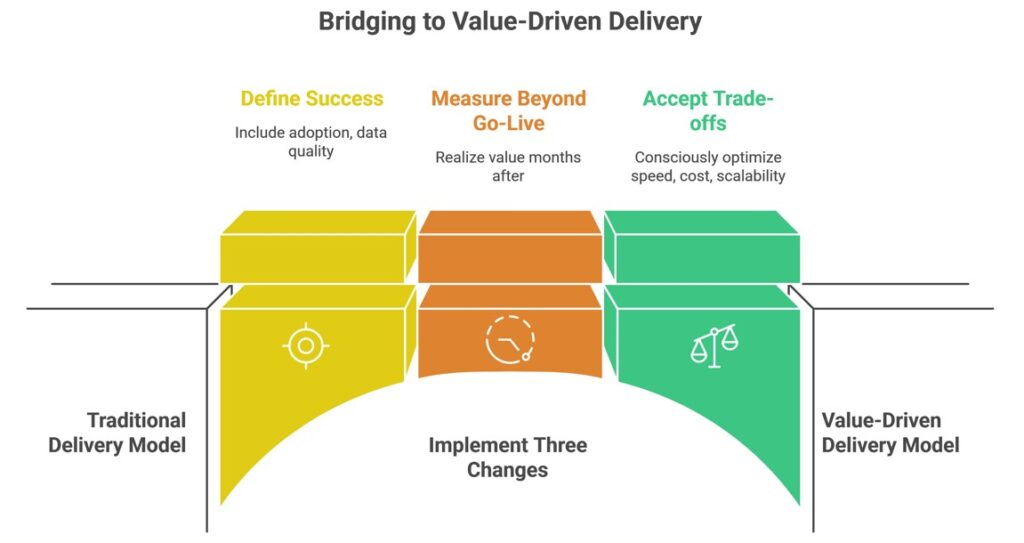

The Bridge: What Executives Must Do Differently

To move from the traditional model to this approach, three changes are required.

First, define success before delivery. This must go beyond time, cost, and scope to include adoption, data quality, process consistency, and operational outcomes.

Second, measure beyond go-live. Most value is realised months after implementation, not at the point of deployment.

Third, accept trade-offs. Speed, cost, scalability, and standardisation cannot all be optimised simultaneously. These decisions must be made consciously at the leadership level.

The Insight

ERP success is not something achieved at a point in time. It is something the organisation moves towards over time.

Without a structured way to understand that movement, organisations fall back on simplified reporting, misleading narratives, and repeated mistakes.

The Next Step

Most organisations do not struggle with ERP because of technology or vendors. They struggle because they have not clearly defined what success means.

If this perspective resonates, the next step is to define your success dimensions, assess your current position across them, and identify where the gaps exist.

That is where real governance begins.

Email us at info@bhaniconsulting.com if you’d like to learn more.