Why most organisations measure the wrong thing—and don’t realise it

The Comfortable Story Every Executive Hears

Two years into an ERP program, the update sounds familiar:

- The system has gone live

- The project stayed within budget

- Key milestones were achieved

- Stakeholders are broadly satisfied

On paper, this is success.

Boards are reassured.

Steering committees close.

Teams move on.

But beneath that narrative, a quieter question remains—rarely asked:

Did the organisation actually become better?

The Question We Avoid

Most organisations spend time defining what success looks like.

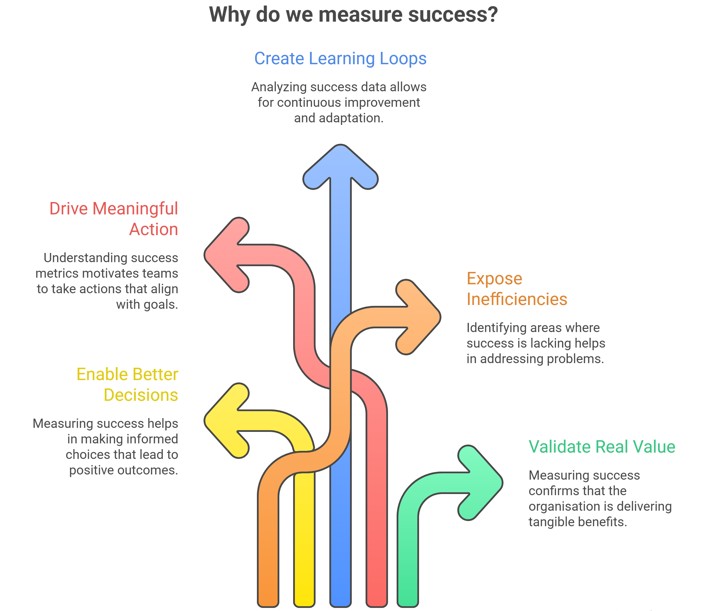

Very few ask:

Why do we measure success in the first place?

This is not a philosophical question. It is structural.

Because the purpose of measuring success is not reporting.

It is not governance formality.

It is not reassurance.

It is to:

- Enable better decisions

- Drive meaningful action

- Create learning loops

- Expose inefficiencies

- Validate real value

In simple terms:

We measure success to improve the organisation.

Where It Breaks

Now consider how success is actually measured in most ERP programs:

- Delivered on time

- Delivered within budget

- Scope achieved

- Go-live completed

These measures are widely accepted. Rarely challenged.

But they do not serve the purpose above.

They do not tell you:

- Whether decision-making improved

- Whether inefficiencies were removed

- Whether capability increased

- Whether value was actually realised

Instead, they answer a different question:

“Was the project delivered?”

Not:

“Did the organisation improve?”

The Executive Success Distortion

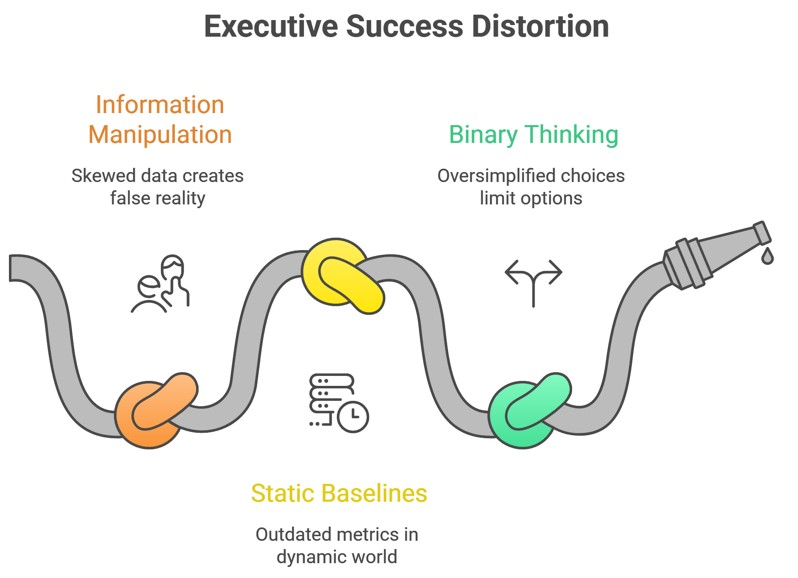

This creates a structural condition:

Success is declared based on perception, not discovered through evidence.

Three forces reinforce this distortion.

1. Manipulation of Information and Narrative

No one sets out to mislead.

But systems and incentives shape behaviour.

- Reports highlight what is progressing well

- Risks are softened or contextualised

- Metrics are selected to support a positive narrative

Over time:

- Green dashboards replace real insight

- Issues become “managed” rather than solved

- Success becomes a story the organisation agrees to believe

2. Static Baselines in a Dynamic Reality

At the start of the program:

- Business case is defined

- Budget is approved

- Timelines are locked

At that point, understanding is limited.

Yet those early assumptions become:

- Fixed benchmarks

- Anchors for success

- The reference point for judgement

The problem is simple:

The organisation learns more as the program progresses—but continues to measure success against what it knew at the start.

Reality evolves.

Measurement does not.

3. Binary Thinking

Success is treated as:

- Success / Failure

- Delivered / Not delivered

This simplifies reporting.

But it removes meaning.

Because organisational improvement is never binary.

It is gradual, uneven, and contextual.

The Shift: Success Is a Spectrum

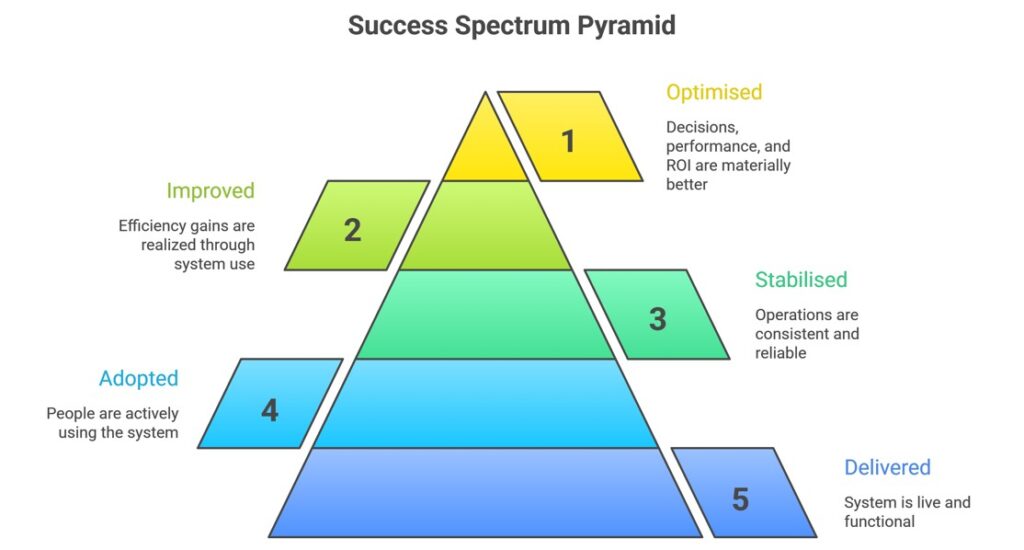

If the purpose of measurement is improvement, then success must be measured as a degree, not a state.

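A more accurate view, as in the figure above, treats success as a series of levels, from Level 5 (the base) up to Level 1 (the highest degree of improvement).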

Most organisations stop at the base levels (4 and 5) and declare success.

Very few reach Level 1 or 2.

Fewer still measure it.

The Business Case Illusion

Executives often rely on the business case as the ultimate benchmark.

But consider this:

The business case is not a truth.

It is an early hypothesis.

It is built when:

- Information is incomplete

- Complexity is underestimated

- Organisational behaviour is not fully understood

Yet it becomes:

- The anchor for accountability

- The justification for decisions

- The definition of success

This creates a subtle but critical issue:

The organisation becomes accountable to its initial assumptions—rather than to actual outcomes.

What This Means for You

If success is misdefined:

- Governance cannot function properly

- Reporting cannot reveal the truth

- Decisions cannot be optimised

- Capability cannot develop

What remains is:

- Delivery without transformation

- Stability without improvement

- Confidence without evidence

How to Recognise It Early

You are likely operating in this condition if:

- Reports are consistently positive, but operational pain persists

- Go-live is treated as the finish line

- Benefits are assumed rather than measured

- The business case remains unchanged despite evolving scope

- There is no visibility of decision quality or rework

- Success is discussed in milestones, not outcomes

These are not project issues.

They are measurement design failures at the executive level.

The Reframe

ERP is not a technology project.

It is a mechanism to:

- Understand how the organisation works

- Improve how decisions are made

- Redesign how value is created

If success measurement does not support this:

The system may be delivered—but the organisation remains unchanged.

A Different Standard

The question is not:

“Did we deliver the ERP?”

It is:

“To what extent are we now better than before?”

Better in:

- decision-making

- efficiency

- clarity

- performance

- value creation

This requires:

- Dynamic measurement

- Honest reporting

- Continuous recalibration of success

Final Thought

Most ERP programs do not fail because they miss targets.

They fail because the targets were never designed to reveal the truth.

Until that changes, success will continue to be declared—

without improvement ever being realised.
